Help me understand this better.

From what I have read online, ARM just licenses its ISA, and each vendor’s CPU design can differ vastly from one another, unlike x86, which is standard and only between AMD and Intel. So Linux support for ARM CPUs is hit or miss and depends on the vendor.

How is RISC-V better at this? Since it is open source, there may not even be a standard ISA like ARMv8. Isn’t it even more fragmented, and harder to support across all the different types of CPUs?

each vendor’s CPU design can differ vastly from one another unlike x86 which is standard and only between amd and Intel.

The ISA guarantees that a program compiled for it can run on any of these vendor designs. For example, native Android binaries run on any SoC from any vendor that implements the ARM ISA they were compiled for. The situation is exactly the same with x86, Intel and AMD: their core designs are very different, yet binaries compiled for x86 run on either Intel or AMD, and on any of their models, even across different microarchitectures. E.g. a binary compiled for x86_64 would run on AMD Zen 2, as well as Intel Skylake, as well as AMD Bulldozer.

How is RISC-V better at this?

It’s better in that it’s free to use. Anyone making a chip implementing RISC-V doesn’t have to pay ARM or Intel for a license. Not that Intel sells them anyway.

The fragmentation issue might become a new problem. With that said we definitely want to move away from the only usable cores using ARM or x86, neither of which we can design and manufacture without the blessing of two corpos, one of which is a proven monopoly abuser.

How did AMD get the rights to build these CPUs? It is the only competitor it seems.

TL;DR: While Intel had their heads shoved up their ass making the Itanium architecture, AMD made a 64-bit variant of x86 that was backward compatible with the older x86 ISA. Technology moved on, and amd64 was adopted while Intel kept trying and failing to push their binary-incompatible architecture.

Eventually, Intel had to give up and adopt AMD’s amd64 ISA. In exchange for letting them use it, Intel lets AMD use the older x86 ISA.

AMD were already using the x86 ISA long before amd64.

https://en.m.wikipedia.org/wiki/AMD

Intel had introduced the first x86 microprocessors in 1978.[51] In 1981, IBM created its PC, and wanted Intel’s x86 processors, but only under the condition that Intel also provide a second-source manufacturer for its patented x86 microprocessors.[12] Intel and AMD entered into a 10-year technology exchange agreement

AMD were also second source for some other Intel logic chips before that deal.

I was only going for explaining why AMD still continues to have the license to the x86 instruction set in modern times, but I appreciate the added historical context to explain to others how they originally had the rights to use it.

Itanium also failed miserably in performance and everything else it set out to deliver, while being ridiculously expensive.

Crazy!

VIA also built x86 CPUs for some time, and they have a license as well. The issue with modern x86_64, though, is that you basically need licenses from both AMD and Intel. They do have a cross-license agreement, but there’s no single point of contact for all the licenses for a modern x86 CPU.

To sell into the US government in the 70s you had to ensure your parts could be sourced from a second company. That way, if you had supply problems, the government would just go to the second source.

AMD was a second source for the 8086.

So way back in, what, the late 70’s or early 80’s, IBM decided they wanted to get into the microcomputer business. They didn’t want to throw a lot of money at developing it in-house, so they slapped together a machine from off-the-shelf components, including an Intel CPU. After failing to get the attention of the guy who wrote CP/M, they hired some little nobody software house called Microsoft to do the operating system, which they licensed on a non-exclusive basis, and figured the copyright on their firmware (the BIOS) would keep the system proprietary. It didn’t. Compaq created and sold a compatible but non-infringing BIOS, which meant IBM had no legal standing to prevent anyone from building or selling machines 100% compatible with their line of PCs.

IBM had accidentally created an open standard, which wasn’t so good for IBM but great for customers. You could price shop. There was a certain security in “if this vendor quits, I can go with another vendor and keep my software and peripherals.” There were competitors to Microsoft’s MS-DOS even before Linux, you could get disk drives and such from multiple companies…only the CPU was truly proprietary to one company.

So as big businesses and governments started adopting these things and paying BIG bucks investing in computer infrastructure, hardware, software, personnel training etc. a lot of bigwigs started worrying about Intel’s future. What if this company goes bust, has a fire at a factory, puts out two whole generations of products that destroy themselves or whatever. Will that pull a rug out from under us? So Intel had to give AMD a license to manufacture x86 chips as a second source.

Add in a mention of Cyrix here, a little company that sprang up also manufacturing x86 chips around the Pentium era. They didn’t have a license from Intel; they reverse engineered and then sold non-infringing compatible CPUs, so there was briefly a third horse in that race.

AMD has served several different roles in the space; they’ve sold identical copies of Intel chips to the point they had both the Intel and AMD logo on them, they sold low-tier budget options, and on occasion they’ve actually out-done Intel at their own game.

They reverse-engineered them. Edit: Huh, apparently I misremembered

RISC-V is better for Linux due to driver support. Vendors making hardware are more likely to use RISC-V for their controllers due to the costs. Modern computers are putting more functions under control of kernels that run on proprietary compute. (There exists a chart showing how little the Linux kernel directly controls.) As more of those devices run RISC-V, they will become more discoverable.

Also, those who can design or program the devices will have more transferable skills, leading to the best designs spreading and all designs improving.

Places in a computer with compute (non-exhaustive, not all candidates for RISC-V):

- BMC

- Soundcard (or subsystem on mainboard)

- Video card (GPU and the controller for the GPU)

- Storage drives

- Networking

- Drive interface controlling card

- Mainboard (not BMC)

- Keyboard

- Mouse

- Monitor

- UPS

- Printer

Will it be perfect? Nope.

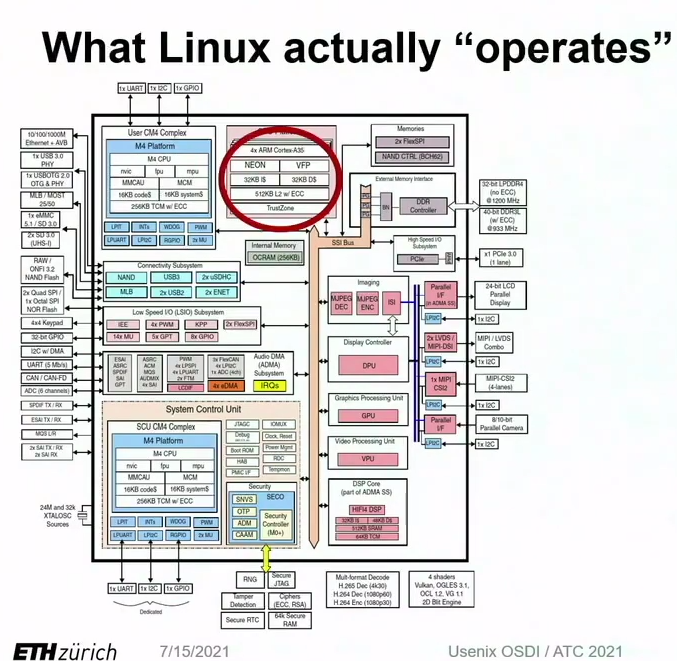

A lot of the vendors will lock things up as well.

There exists a chart showing how little the Linux kernel directly controls

I’d be greatly interested in seeing this chart

USENIX ATC '21/OSDI '21 Joint Keynote Address-It’s Time for Operating Systems to Rediscover Hardware

Timothy Roscoe, ETH Zurich

At 19:22

Examples he gives are what you’d expect:

- Linux doesn’t control the bootloader

- Linux doesn’t control power management

There are many systems on the chip that Linux doesn’t have control over, and that could be compromised by a cross-SoC attack

Thank you for pulling the image out.

This talk surprised me at the time. I was starting the eye-opening experience of designing hardware. Linux orchestrates the hardware more than it controls it.

For me it opened my eyes to the idea that all you really need is some CPU time and a little RAM space to have a full-fledged, performant system. Sure, there will be a large attack surface for remote spying, but if you just want to code and play games then it’s pretty amazing how little you need :-)

Same here

To avoid convo in multiple places: it is in a reply to the message you replied to.

Thanks!

There’s also the fact that Arm doesn’t really work with arbitrary PC style hardware. Unless this got fixed (and there have been some pushes) you have to pretty much hard code the device configuration so you can’t just (for example) pull a failed graphics card and swap a new one and expect the computer to boot. This isn’t a problem for phone (or to an extent: laptop) makers because they’re happy to hard code that info. For a desktop, though, there’s a different expectation.

RISC-V does support this, I believe, so in that sense it fits the PC model better.

I don’t follow. Isn’t it the OS’s job to discover hardware? How does the CPU instruction set architecture come into play here?

See the start of this post talking about device tree models vs boot time hardware discovery.

There’s no reason an arm chip/device couldn’t support hardware discovery, but by and large they don’t for a variety of reasons that can mostly be boiled down to “they don’t want to”. There’s nothing about RISC-V that makes it intrinsically more suited to “PC style” hardware detection but the fact that it’s open hardware (instead of Apple and Qualcomm’s extremely locked down proprietary nonsense) means it’ll probably happen a lot sooner.
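To make the difference concrete, here is a toy sketch of the two models. It is not real kernel code: `Bus`, `Device`, the slot layout and the IDs are all made up for illustration, and real systems use device trees, ACPI and PCI enumeration rather than anything this simple.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    vendor_id: int
    device_id: int


class Bus:
    """Hypothetical bus whose slots can be probed for an ID (loosely PCI-like)."""

    def __init__(self, slots):
        self.slots = dict(slots)

    def probe(self, slot):
        # Returns None when the slot is empty.
        return self.slots.get(slot)


# Baked in when the firmware/kernel image was built (the "hard coded" model).
STATIC_DESCRIPTION = {0: Device(0x1234, 0x0001)}


def devices_from_static_description(bus):
    # Trusts the table and never looks at what is actually plugged in.
    return dict(STATIC_DESCRIPTION)


def devices_from_enumeration(bus, slot_count=4):
    # Asks every slot what is there at boot time.
    return {s: d for s in range(slot_count) if (d := bus.probe(s)) is not None}


# Someone swapped the card in slot 0 for a different model.
bus = Bus({0: Device(0x1234, 0x0002)})
print(devices_from_static_description(bus))  # still describes the old card
print(devices_from_enumeration(bus))         # sees the new card
```

Either approach can be used with any ISA; the sketch only shows why a swapped card is a problem for the first one.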

Thank you, that was very elucidating.

Better for Linux? I’m not sure I would say it is. Better for the world in general? When you compare things like power consumption, you can definitely see that in some use cases (the average user), ARM is superior. But for Linux? Maybe by default owing to the fact that it’s more modern. As for RISC-V, the core is open source and “all” the extensions are proprietary, so it’s not as open source as it pretends to be. But it’s definitely better than what we’re currently accustomed to as mainstream.

Shortest answer is that RISC-V is Open Source and ARM is proprietary but what does that mean?

First, it means “freedom” for chip makers. They can do what they want, not what they are licensed to do. There are a lot of implications to this so I will not get into all of them but it is a big deal. There is even a standard way of adding ISA extensions. It makes RISC-V an interesting choice for custom chip makers trying to position themselves as a platform ( think high-end, specialty products ).

If you are a country or an economy that is being hit with trade restrictions from the Western world ( eg. China ), then the “freedom” of RISC-V also provides you a way around potentially being denied access to ARM.

For chip makers, the second big benefit is that RISC-V is “free” as in beer—no licenses. For chips in laptops or servers, the ISA license is a small part of the expense per unit. But for really high-volume, low-cost use cases, it matters.

In the ARM universe, middle of the market chip makers license their designs off of ARM ( not just the ISA ). This is why you can buy “Cortex” CPUs from multiple suppliers. Nobody else can really occupy this space other than ARM. In the RISC-V universe, you can compete with ARM not just by selling chips but by licensing cores to others. So, you get players like StarFive and Milk-V that again want to be platforms.

So, RISC-V is positioned well across the spectrum—somewhat uniquely so. This makes it an excellent bet for building software and / or expertise.

It is only the ISA that is Open Source though. Unlike some of the other answers here imply, any given RISC-V chip is not required to be any more open than ARM. The chip design itself can be completely proprietary. The drivers can be proprietary. There is no requirement for Linux support, etc. That said, the “culture” of RISC-V is shaping up to be more open than ARM.

Perhaps because they want to be platforms, or perhaps because RISC-V is the underdog, RISC-V companies are putting more work into the software. RISC-V chip makers seem more likely to provide a working Linux distro, for example, and to be working on getting hardware support into the mainline kernel. There is a lot of support in the RISC-V world for standards for things like firmware and booting.

With the exception of Raspberry Pi, the ARM world is a lot more fractured than RISC-V. You see this in the non-Pi SBC world for example. That said, if we do consider just Raspberry Pi, things are more unified there than in RISC-V.

Overall, RISC-V may be maturing more quickly than ARM did but it is still less mature overall. This is most evident in performance. Nobody is going to be challenging Apple Silicon or Qualcomm X Elite with their RISC-V chips just yet. Not even the Pi is really at risk.

Eventually, we will also just get completely Open Source designs that anybody can implement. These could be University research. They could be state funded. They could be corporately donated designs ( older generations maybe ). Once that starts to happen, all the magical things that happened for Open Source software will happen for RISC-V as well.

Open Source puts a real wind at RISC-V’s back though. And no other platform makes as much sense from the very big to the very small.

Thank you for the great write-up. Man, I sometimes regret not having studied computer science. I am really invested in open source and net neutrality etc., but I can’t contribute shit so I’m restricted to a mere consumer.

I’m just happy that my Garuda Linux just keeps working most of the time, because I couldn’t code anything if my devices failed.

I can’t contribute shit so I’m restricted to a mere consumer

That’s not true! Contributing documentation improvements is critically important for the growth and health of the open-source community but is very overlooked.

I have a degree and a master’s in the hardware area but learned all of those things about ARM and RISC-V by myself. I only got to see MIPS and a hypothetical ISA in my courses. Heck, RISC-V and the Raspberry Pi didn’t even exist.

All you need is interest and time.

Yes. Fewer blobs than ARM. I’m not even sure if some RISC-V chips have any blobs – someone correct me if I’m wrong.

Also getting cockblocked by proprietaryisms is bullshit. HDMI is a Circle Jerk of capitalism controlling competition and the market for self preservation.

Please somebody correct me if I’m wrong, but I really don’t find “chip makers don’t have to pay licence fees” a compelling argument that RISC-V is good for the consumer. There are only a few foundries capable of making CPUs, and the desktop market seems incredibly hard to break into.

I imagine it’s likely that the cost of ISA licencing isn’t what’s holding back competition in the CPU space, but rather it’s a good old-fashioned duopoly combined with a generally high cost of entry.

Of course, more options is better IMO, and the Linux community’s focus on FOSS should make hopping architectures much easier than on Windows or MacOS. But I’d be surprised if we see a laptop/desktop CPU based on RISC-V competing with current options anytime soon.

Efficiency too. I think RISC-V is mostly not made by these companies, which is why their efficiency is below x86_64 and performance is below an Intel Core 2 Duo

So RISC-V is less performant and less efficient? That’s opposite to what I heard about it, at least for the efficiency.

Compared to ARM, so makes total sense.

Lower ISA license fees do nothing to help desktop users directly. The fact that “anybody can make one” is what will help. The competition and innovation that RISC-V will drive will eventually be a massive boon to end-users.

It is not hard to “make your own chips” anymore. The barrier to entry has dropped tremendously. Apple has their own chips. Google has their own chips. Samsung has their own chips. Microsoft even has their own chips. Except none of them actually “make” processors. They are all fabricated ( manufactured ) by TSMC in Taiwan. Only Intel really makes its own chips and even they do not make all of them. TSMC would fabricate chips for you too if you placed a big enough order.

It is less about the cost of the license and more about being able to license it at all. Only AMD and Intel can design chips implementing the x86-64 ISA. ARM is not much better. Only huge customers can get a license to create novel ARM chips. Most ARM customers are licensing core designs off of ARM themselves ( eg. Cortex ).

Anybody can legally design a RISC-V CPU—even you! So, if you are a company or country that cannot get access to x86 or even ARM ( like Alibaba / China ) then RISC-V is your answer. Or if you have an innovative idea for a chip, RISC-V is for you. And since it is Open Source, RISC-V allows you to collaborate on designs or even give them away:

https://github.com/OpenXiangShan/XiangShan

The “desktop” market is incredibly hard to break into but that is because the “desktop” market is more about the operating system and its applications.

Apple can move to whatever ISA they want as they control both the hardware and the operating system. They have migrated several times, most recently to ARM with “Apple Silicon”.

Microsoft would love to move to ARM to compete with the power efficiency of Apple Silicon. Outside of gaming, the “desktop” market these days is laptops where things like battery life really matter. Microsoft has failed a few times though because of the application side. The recent push with Qualcomm X Elite looks the most promising but time will tell.

Only Microsoft and Apple matter on the desktop.

I use desktop Linux but Linux is less than 5% of the desktop market. That is a shame because Linux, with its vast ecosystem of Open Source applications, is a lot easier to port to new architectures.

You can run a RISC-V Linux desktop today. It will be super slow but you can do it.

We need RISC-V to get faster and more power efficient. Thankfully, there is competition.

There’s literally a video from Jeff Geerling on the RISC-V community posted very shortly after your question, maybe that helps.

Here’s the video:

https://youtu.be/YxtFctEsHy0?feature=shared

Also, maybe ask in the RISC-V community. They’ll probably have more insight.

Actually, I think RISC-V is even worse than ARM. With ARM, at least you have a quite reliable instruction set on the CPU. With RISC-V, most vendors have their own extensions of the instruction set, which opens a big can of worms: Either you compile all your stuff for your own CPU, or you have a set of executables for each and every vendor’s flavor of RISC-V commands, or you exclusively use the RISC-V core commands. The first would be only for hardcore geeks, the second would be a nightmare to maintain, and the third would not be really efficient. Either way, it sucks.
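As a rough sketch of what “which flavor does this binary need” looks like in practice, the snippet below compares RISC-V ISA strings of the usual `rv64imafdc_zba_zbb` shape. The helper names are made up, `xvendorfoo` is an invented vendor extension, and the parser assumes every multi-letter extension is separated by an underscore and carries no version number, which real ISA strings do not always guarantee.

```python
# "g" is the conventional shorthand for the general-purpose base set.
G_EXPANSION = {"i", "m", "a", "f", "d", "zicsr", "zifencei"}


def parse_isa(isa: str):
    """Split an ISA string into (register width, set of extension names)."""
    isa = isa.lower()
    if not isa.startswith(("rv32", "rv64")):
        raise ValueError(f"not an RV32/RV64 ISA string: {isa!r}")
    xlen = int(isa[2:4])
    head, *multi = isa[4:].split("_")
    exts = {name for name in multi if name}  # multi-letter extensions
    for letter in head:                      # single-letter extensions
        if letter == "g":
            exts |= G_EXPANSION
        else:
            exts.add(letter)
    return xlen, exts


def can_run(binary_isa: str, cpu_isa: str) -> bool:
    """True if the CPU offers every extension the binary was built for."""
    bin_xlen, needed = parse_isa(binary_isa)
    cpu_xlen, available = parse_isa(cpu_isa)
    return bin_xlen == cpu_xlen and needed <= available


print(can_run("rv64imac", "rv64gc_zba_zbb"))   # binary uses only common parts
print(can_run("rv64gc_xvendorfoo", "rv64gc"))  # binary needs a vendor extension
```

The three options in the comment map onto this directly: build for one exact string, ship one build per string, or build only for a subset every target contains.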

The same was true for x86, Intel had SSE and AMD had 3DNow, programs just provided different codepaths per available feature (this is where Intel pulled some dirty tricks with their ICC). It’s not that big of a problem.
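A minimal sketch of that “different codepaths per available feature” pattern, in Python for brevity. The function names are hypothetical and the “wide” variants are only stand-ins for real SIMD code; the `flags` / `Features` keys are the ones Linux commonly prints in `/proc/cpuinfo` on x86 and ARM respectively.

```python
def flags_from_cpuinfo(text: str) -> set:
    """Extract the CPU feature flags from /proc/cpuinfo-style text."""
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() in ("flags", "Features"):
            return set(value.split())
    return set()


def dot_portable(a, b):
    # Plain fallback that works everywhere.
    return sum(x * y for x, y in zip(a, b))


def dot_wide(a, b, width):
    # Stand-in for a SIMD path: handles `width` elements per step.
    total = 0.0
    for i in range(0, len(a), width):
        total += sum(x * y for x, y in zip(a[i:i + width], b[i:i + width]))
    return total


# Ordered from most to least preferred.
CODEPATHS = [
    ("avx2", lambda a, b: dot_wide(a, b, 8)),
    ("sse2", lambda a, b: dot_wide(a, b, 4)),
]


def pick_dot(flags):
    """Return (name, function) for the best codepath the CPU supports."""
    for feature, impl in CODEPATHS:
        if feature in flags:
            return feature, impl
    return "portable", dot_portable


sample = "processor\t: 0\nflags\t\t: fpu sse sse2 avx avx2\n"
name, dot = pick_dot(flags_from_cpuinfo(sample))
print(name, dot([1, 2, 3, 4, 5], [5, 4, 3, 2, 1]))
```

The check happens once at startup, and every path has to produce the same result, which is the part that takes discipline.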

Yes, but those were only two distinct flavors, and both had a lot of pull. And those special instructions were only needed in special applications and drivers. With RISC-V we are talking about a dozen different flavors, all by small and mostly insignificant players, and the commands that extend the basic command set are commands for quite common operations. Which is a totally different scenario than the SSE/3DNow issue back then.

Some extensions won’t matter in the slightest, especially concerning controllers that use the instruction set. For the vendors selling general purpose CPUs, we’ll see how it shakes out. It’s in their interest to retain compatibility, so I suppose it’ll be similar to how it’s handled for Vulkan: vendors having their own extensions that at one point get merged into a common de facto standard for general purpose computing or something.

i’ve had the same thought lately. the common arm design approach around the bootloader seems to turn old Android phones and tablets into e-waste sooner than necessary, in theory they could all run Linux and be useful for another ten years. but it’s hard enough to port mainline Linux to Android devices, and almost impossible to get all the included hardware working properly

I don’t think there have been huge issues with incompatible ISAs on ARM. If you use NEON extensions, for example, you would typically also have a plain C implementation that does the same thing when the extensions are not available. Most people don’t handwrite such code, but those that do usually go the extra mile. ARM SoCs usually have closed source drivers that cause headaches, as well as no standardized way of booting.

I haven’t delved super-deep into RISC-V just yet, but as I understand it, these systems will do UEFI, solving the bootloader headache. And yes, there are optional extensions and you can even make your own. But the architecture allows for implementing those extensions in software. If you don’t have the gates for your fancy vector instruction, you can provide instructions that replicate it. It’ll be slower on your hardware, but it’ll be compatible if done right.

bc arm is not free
